A Three-Step Method to Identify Key Nursing Indicators to Evaluate the Impact of Integrated Bedside Terminals on Patient Care: A Collaboration Between Clinicians and Researchers

By Félix Prophète,* DÉPA

Administrative Process Specialist, McGill Nursing Collaborative, Jewish General Hospital, CIUSSS West-Central Montreal

Ève Bourbeau-Allard, MSI, MA

Research Librarian, Centre for Nursing Research, Jewish General Hospital, CIUSSS West-Central Montreal

Marc-André Reid, RN, MSc(c)

Chief Nursing Information Officer, Jewish General Hospital, CIUSSS West-Central Montreal

Isabelle Caron, MScN

Associate Director of Nursing, CIUSSS West-Central Montreal

Dr. Christina Clausen, RN, PhD

Coordinator, McGill Nursing Collaborative, CIUSSS West-Central Montreal; Faculty Lecturer, Ingram School of Nursing, McGill University; Course Director, Office of Interprofessional Education, McGill University

Dr. Margaret Purden, RN, PhD

Scientific Director, Centre for Nursing Research, Jewish General Hospital, CIUSSS West-Central Montreal; Associate Professor, Ingram School of Nursing, McGill University; Director, Office of Interprofessional Education, McGill University

Citation: Prophète, F., Bourbeau-Allard, E., Reid, M., Caron, I., Clausen, C. & Purden, M. (2022). A Three-Step Method to Identify Key Nursing Indicators to Evaluate the Impact of Integrated Bedside Terminals on Patient Care: A Collaboration Between Clinicians and Researchers. Canadian Journal of Nursing Informatics, 17(3-4). https://cjni.net/journal/?p=10460

Abstract

Many healthcare settings have implemented digital health platforms, hoping to remove common workflow barriers, reduce the incidence of adverse events, and increase the quality and safety of care. However, the impact of health information technology (HIT) in clinical settings is not well understood, and inconsistent descriptions of how indicators are selected to measure HIT’s effects on care raise questions about the quality and sensitivity of such measurements.

This paper describes the process of identifying and validating key indicators to evaluate the effects of digital vital signs monitors on patient care in a tertiary care hospital’s medical and surgical units. We developed and applied a three-step methodology. First, we conducted a comprehensive literature review, retaining 25 articles from which we extracted 65 potential indicators. We then classified the indicators according to 1) operational versus clinical levels, and 2) Donabedian’s categories of structure, process, and outcomes. Finally, through a series of roundtable discussions with clinical stakeholders, we applied MacDonald’s practice-based criteria to select and validate 12 indicators as being relevant for the context. This collaborative validation process can be replicated at other healthcare facilities for selecting sensitive and practicable indicators.

Keywords

Health information technology, Vital signs monitors, Point-of-care systems, Validation process, Indicators, Clinician-researcher collaboration

Background

Changes in a patient’s physiology or vital signs are usually the first parameters to signal the patient’s clinical decline (Alam et al., 2014; Kramer et al., 2019). Patients at increased risk of clinical deterioration often require frequent assessment of their vital signs (Helfand et al., 2016) because early detection of any alteration in these parameters alerts healthcare professionals to provide appropriate medical or surgical interventions in a timely manner (Alam et al., 2014; Brown et al., 2014; Cho et al., 2020; Murphy et al., 2018). However, error or delay in the detection of alterations may result in a rapid decline of the patient’s health, and can further complicate the situation by giving a false assessment of the patient’s condition (Brown et al., 2014; Jones et al., 2011). It is thus essential to measure vital signs accurately and in a timely manner.

Technological devices now allow continuous monitoring that reveals deterioration in a patient’s health parameters at an early stage (Prgomet et al., 2016). Traditionally, patient outcomes (e.g., pressure ulcers, pain) and vital parameters (e.g., blood pressure) have been measured manually by health practitioners, often nurses, which is time-consuming. In a study of clinical staff perceptions of vital sign monitoring practices on general wards, Prgomet and colleagues (2016) noted that manual assessments were done intermittently, which poses the risk of missing a patient’s clinical deterioration. The use of health information technology (HIT) seems to offer a more accurate and timely alternative for measuring vital signs and other health parameters, such as falls and pressure ulcers.

Consequently, the past two decades have seen exponential growth in the design and implementation of HIT to improve patient assessment and outcome data. Technology enables the automation of vital signs recording, provides an early detection of alterations in vital signs (Mau et al., 2019; Smith et al., 2014), is associated with a decrease in the occurrence of missed nursing care (Mau et al., 2019; Piscotty & Kalisch, 2014; Smith et al., 2009), may reduce the burden of copying information manually, and frees up nursing time for other patient-related tasks (Liu & Walsh, 2018; Wakefield, 2014). A recent systematic review on the impact of health information technology on nurses’ time showed that HIT enables nurses to engage in higher value-added care activities, spending more time providing direct patient care (Moore et al., 2020). HIT devices have been identified as important operational means for storing, processing, analyzing and transmitting health information to ensure efficient and better-quality health care (Jardim, 2013).

However, the problem of measuring patient status appropriately is far from solved if digital monitors do not track the health quality indicators deemed relevant in a particular clinical context or assess the health parameters required to prevent complications and adjust treatment (such as pain, skin integrity, intake and output, and level of consciousness). We refer to an indicator as a “documentable or measurable piece of information” (MacDonald, 2011, p. 1), qualitative or quantitative in nature, collected to assist care providers with quality monitoring and improvement, benchmarking, and prioritization (Mainz, 2003). Because there is a plethora of health quality indicators in the literature, it is important to ensure that the set of indicators used in a health care facility is appropriate, relevant, and aligned with management’s data collection priorities. At this point, and to the best of our knowledge, the literature offers no consistent methodology that specifically guides the selection of health quality indicators to assess the impact of digital monitors on nursing care and, ultimately, on patient outcomes.

This paper describes a rigorous and replicable methodology for selecting key indicators to evaluate the impact of health information technology (in particular, digital vital signs monitors / integrated bedside terminals) on nursing care. It then presents an illustrative case, using the context of a tertiary care hospital.

The three-step methodology

Table 1 summarizes the three-step methodology described in the sections below. This process is collaborative and involves nursing researchers, clinical stakeholders from nursing leadership, and, where possible, a health librarian.

Table 1: Three-step methodology summary

Step one: Review of literature

According to Mainz (2003), health quality indicators are selected based on the strength of their scientific evidence for predicting outcomes, and that evidence can be based on the scientific literature or on the rigorous deliberation of an expert panel of health professionals. To identify the range of health quality indicators already published in the literature, our process begins with a scoping review. The purpose of the scoping review is to map out the literature (Levac et al., 2010) on health care quality indicators assessing the impact of information technology on nursing care at the bedside, and to identify the major sources of evidence from which to extract existing indicators. A scoping review also provides an overview and clarification of the topic in question, its extent, components, characteristics, related key concepts, and gaps in the research (Levac et al., 2010; Petit & Cambon, 2016; Whittemore & Knafl, 2005). We found that consultation with clinical stakeholders at a later stage of the process can supplement the data obtained from the scoping review with practical considerations, especially where gaps have been identified in the literature. Because the literature on quality indicators is vast and can easily become confusing, the scoping review serves as a useful starting point, providing a better understanding of which aspects of the topic to focus on.

Consistent with the Arksey and O’Malley (2005) and Levac et al. (2010) frameworks, this scoping review encompasses the following stages: articulating the research question to guide the literature search, preferably in consultation with clinical stakeholders; identifying and selecting relevant studies; charting and collating relevant information from the selected studies on the given topic; and summarizing and reporting the results for further consultation (Arksey & O’Malley, 2005; Levac et al., 2010; Xiao et al., 2017). Relevant papers are selected based on their authenticity/accuracy, methodological quality, informational value, and representativeness (Whittemore & Knafl, 2005). The rigour of this literature search is essential; the quality of the indicators that will be retained in the subsequent stages of the selection process relies largely on the methodological quality of the studies from which the indicators are extracted. Obtaining the assistance of a health research librarian can enhance the thoroughness of the literature review process (Koffel, 2015; Rethlefsen et al., 2015).

The search strategy for the review comprises three steps, in accordance with the JBI Manual for Evidence Synthesis (Peters et al., 2020). An initial, exploratory search aims at discerning important concepts, keywords, and index terms, as well as key studies. Once this preliminary review has sufficiently clarified the topic, a comprehensive and more oriented database search is conducted to identify more relevant papers. Finally, the reference lists of included papers are surveyed to locate additional sources. As such, the literature review aims at clarifying the topic of interest, and then deepening the state of knowledge around this topic.

Step two: Identification and classification of the indicators

Following the selection of studies meeting the inclusion criteria, indicators are extracted from the body of literature until they become redundant across these studies. They are then categorized in a logical manner to make their listing more understandable to all stakeholders. Preferably, a cross-category framework consistent with complex health care delivery systems is used to ensure that the set of indicators selected is reasonably diverse and covers all relevant health care categories (Jennings, 1999). We use a combined framework based on Donabedian’s quality of care model (Donabedian, 1974) and Mestrom et al.’s categorization system, which classifies indicators as either operational or clinical (Mestrom et al., 2019).

Donabedian’s tridimensional model of structure, process, and outcomes (Donabedian, 1974) remains a benchmark in health services research. It has been used repeatedly in health care quality evaluation (Bermudez-Camps et al., 2021; Labella et al., 2021; Martinez et al., 2018; McCance, 2003). For example, in a recent systematic review of what constitutes a good quality set of indicators, the authors found that the Donabedian framework was used in 42 out of 62 studies identified (Schang et al., 2021). Moreover, it is a proven approach in successful implementation of a service innovation (Binder, 2021; Gardner et al., 2014). Structure refers to all the factors that affect the context in which care is delivered (e.g. facilities, equipment, human resources); processes refer to all the activities that make health care possible, whether they relate to technical or interpersonal processes (e.g. diagnoses, treatments, prevention care); and outcomes refer to all the effects or changes on health status of patients and populations, quality of life, satisfaction, and others (Donabedian, 1974; Mainz, 2003; McCance, 2003).

As Donabedian’s model was found to be suboptimal in incorporating patient medical history and factors related to the health care profession itself, which are important in assessing quality of care (Coyle & Battles, 1999), we used an additional categorization to fill this gap. The selected indicators are classified as relating to either the operational or clinical aspects of the hospital’s care units. The operational level refers to the usage of the automated system, whereas the clinical level refers to the impact of a variable (in this case, the HIT use) on patient outcomes, such as length of hospital stay, incidence of pressure ulcers, and falls (Mestrom et al., 2019).

After completing the classification work, researchers can seek initial input of clinical stakeholders to check that indicators extracted from the literature appear acceptable for the setting, though thorough validation will be completed at the next step.
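The two-axis classification described in this step can be sketched as a small data structure. This is a minimal illustration only, not the tool used by the authors: the class and function names are hypothetical, and the placement of each example indicator in a cell is an assumption made for demonstration rather than a reproduction of Table 3.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    """Donabedian's three categories."""
    STRUCTURE = "structure"
    PROCESS = "process"
    OUTCOME = "outcome"


class Level(Enum):
    """Operational versus clinical level (after Mestrom et al.)."""
    OPERATIONAL = "operational"
    CLINICAL = "clinical"


@dataclass(frozen=True)
class Indicator:
    name: str
    category: Category
    level: Level


def cross_tabulate(indicators):
    """Count indicators in each (category, level) cell of the framework."""
    return Counter((ind.category, ind.level) for ind in indicators)


def empty_cells(indicators):
    """List framework cells that no indicator covers yet."""
    counts = cross_tabulate(indicators)
    return [(c, l) for c in Category for l in Level if counts[(c, l)] == 0]


# Illustrative entries only; the cell assignments are assumptions.
candidates = [
    Indicator("Length of hospital stay", Category.OUTCOME, Level.CLINICAL),
    Indicator("Incidence of pressure ulcers", Category.OUTCOME, Level.CLINICAL),
    Indicator("Falls", Category.OUTCOME, Level.CLINICAL),
    Indicator("Usage of the automated system", Category.STRUCTURE, Level.OPERATIONAL),
]

for (category, level), n in cross_tabulate(candidates).items():
    print(f"{category.value} / {level.value}: {n}")
print("Uncovered cells:", len(empty_cells(candidates)))
```

Listing the uncovered cells is one simple way to check that a candidate set is reasonably diverse before it is brought to stakeholders.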

Step three: Validation of the selected indicators

This final step involves a series of discussions and deliberations between the clinical stakeholders, who will ultimately use the indicators, and the researchers, who conducted the literature review and classified the indicators. Clinical stakeholders in leadership positions from the health care setting join the researchers to discuss the rationale and relevance of each indicator according to local needs. Based on previous studies, involvement of clinical stakeholders with decision-making power ensures the use and the relevance of the indicators selected for a particular context (Bryson & Patton, 2010; Patton, 2008). The entire team meets for a series of one-hour discussion sessions (e.g., three to four), spread over time as needed, until they have selected all the indicators required for the health care setting.

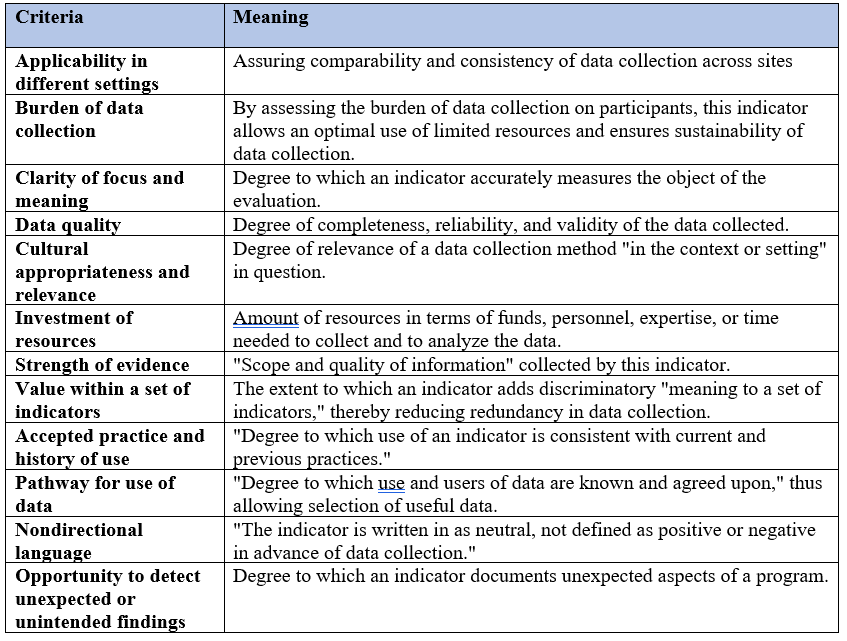

Given that knowledge and perceptions of the indicators may vary among those who participate in deliberative discussions, it is critical to ensure a common language among clinical stakeholders and researchers when accepting or rejecting certain indicators. To guide this series of discussions, MacDonald’s list of Criteria for Selection of High-Performing Indicators (MacDonald, 2011) is used. It is a robust yet simple tool that facilitates deliberative discussions among many stakeholders with varying levels of knowledge and perceptions of evaluation. Specifically, MacDonald’s criteria have three main advantages: 1) they offer the same understanding of, and vocabulary about, the indicators to all participants; 2) they help participants stay connected to the evaluation needs; and 3) they link data collection activities to actual intended uses of findings (MacDonald, 2011).

MacDonald’s tool has 12 criteria for selecting high-performing indicators (MacDonald, 2011), as presented in Table 2 below.

Table 2: MacDonald’s criteria (MacDonald, 2011)

During discussions, it is anticipated that several pre-selected indicators will be rejected while others will be retained, in accordance with MacDonald’s criteria and the clinical stakeholders’ needs. Following the discussion with stakeholders, the research team may need to return to the literature to confirm the choice of indicators or seek out new indicators.

Methods

This section describes a case study to illustrate our three-step methodology. The objective was to select key indicators to evaluate the impact of integrated bedside terminals in the medical and surgical units of a tertiary care university hospital in the urban center of Montreal, Canada.

This hospital has embarked on a new paradigm of nursing care involving a shift towards digital health. About 400 digital vital signs monitors with the ability to collect a wide range of clinical data were installed throughout the medical and surgical division, including in nine acute care units and in most intensive care units. Integrated bedside terminals (IBTs) are technological devices installed at the bedside to capture patient health information and connected to a central station to display the patient profile. Serving as connectivity hubs, they are highly interoperable as they allow information on many health parameters to be shared with other devices via Bluetooth and Wi-Fi. This permits continuous, live monitoring of the patient’s condition (Iroju et al., 2013) irrespective of the nurse’s location on the unit. The terminals collect data on vital signs, standard evaluations (e.g., pain level, Braden score, risk of falling), an Early Warning Score of patient deterioration (a composite of several indicators of patient health status that alerts healthcare professionals when a predetermined threshold is reached) (Helfand et al., 2016), frequency of turning and positioning (based on patches on the patient’s chest), nutritional and activity status (derived from height, weight, amount of food consumed, number of hours walking), and additional parameters deemed necessary for each specialty or unit.

As an integral part of the nursing care at this university hospital, the health information technologies are intended to alleviate the documentation processes, allowing nurses to spend more time at the patients’ bedside to provide care, and to reduce the incidence of adverse events and increase quality and safety of care. Along with the push to integrate technology into nursing workflows, there was an equal effort to track the impact of technology to see if it achieves its intended goals. Therefore, there was a need to understand more specifically how IBTs optimize nursing care and the extent to which they impact patient outcomes at the bedside, since many patient health outcomes (e.g., pain, falls) fall within the nursing mandate. We developed the three-step methodology described above in response to this data collection and assessment need.

Case Study

The process for selecting and validating indicators to assess the IBTs at the given hospital followed the three-step methodology described above.

Step one: Review of the literature process

We conducted a scoping review to guide the selection of indicators for our specific hospital setting. To select relevant studies, we referred to criteria frequently used in the literature, notably their “authenticity, methodological quality, informational value, and representativeness of available primary sources” (Whittemore & Knafl, 2005, p. 550). Studies or papers that were anonymous, undated, poorly structured, unpublished, or not related to the themes under consideration were excluded.

Initially, a preliminary search was conducted in English and French in order to find the appropriate concepts used to describe the relationship between health information technology and better nursing care. Of the 120 articles found during this exploratory search, 20 met the inclusion criteria, five of which provided indicators that were later retained for discussion with clinical stakeholders. This first search provided additional conceptual clarification (Davis et al., 2009), helping to focus on appropriate keywords to guide a more extensive database search. The more frequent terms and concepts found were: Vital signs machines or monitors, Connected health, Physiologic monitoring, Point-of-care systems, Digital health, Healthcare information technology or system, Interoperability, Documenting at bedside, Technology at the bedside, Early warning scoring system, Value-based care, and Missed care.

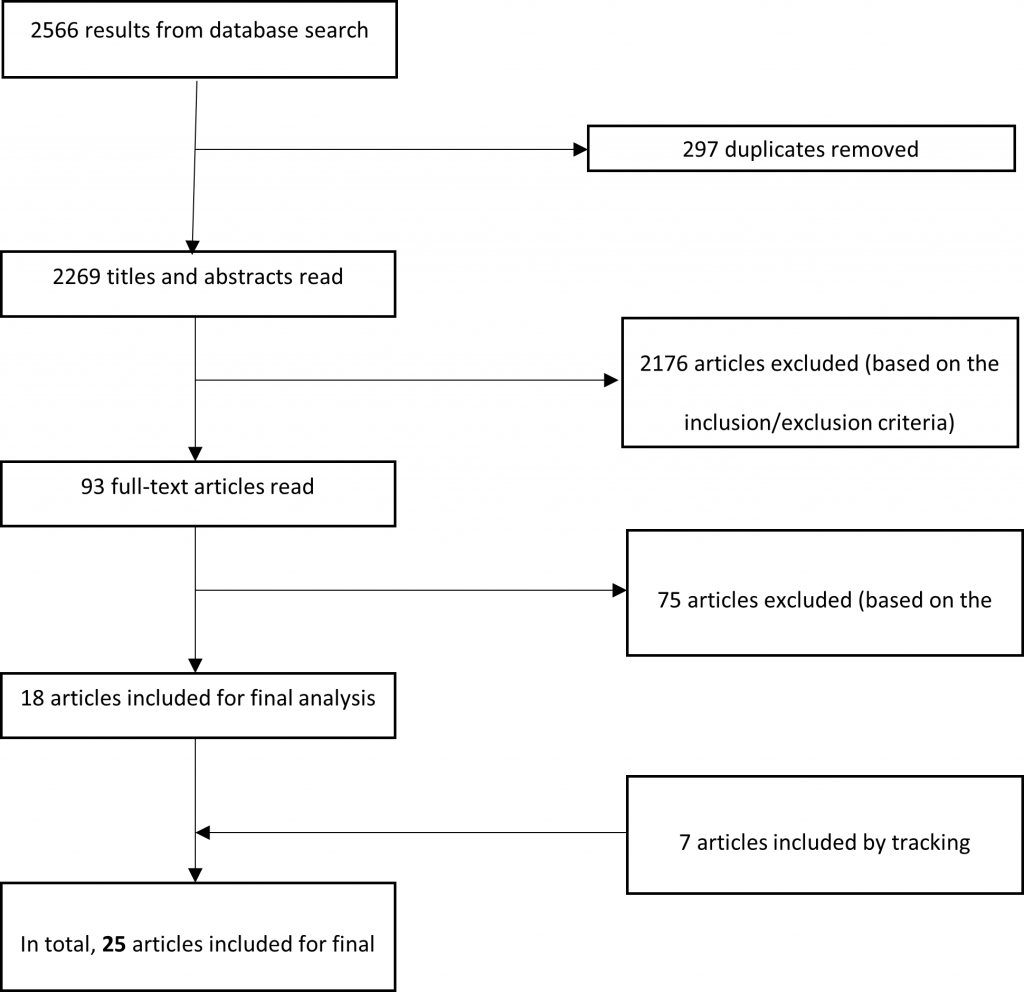

These concepts were then used in a more comprehensive literature search to look for studies published between 2005 and 2020 in the following electronic databases: MEDLINE (Ovid and PubMed), Embase, and CINAHL, with the support of a librarian specialized in the health sciences. This literature search was supplemented by tracking the bibliographical references of certain articles and documents deemed relevant. A total of 2566 articles were identified, including the studies identified in the preliminary literature search and in-depth database search: 297 duplicates were removed, and 2251 articles were excluded on the basis of the inclusion/exclusion criteria specified earlier. A total of 25 articles (of which seven were found by tracking citations) were included for final analysis (Figure 1).

Figure 1: Literature Search Process and Results
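The screening counts reported above can be reconciled with a short calculation. The sketch below assumes one reading of the text, namely that the seven articles found by citation tracking were added after the 2566 identified records had been deduplicated and screened; the variable names are illustrative.

```python
# Counts reported in the text.
identified = 2566            # records from the preliminary and database searches
duplicates = 297             # duplicates removed
excluded = 2251              # excluded on the inclusion/exclusion criteria
from_citation_tracking = 7   # included articles found via reference lists

# Assumption: citation-tracked articles are added after screening.
retained_from_search = identified - duplicates - excluded
included = retained_from_search + from_citation_tracking

print(retained_from_search)  # 18
print(included)              # 25
```

Under this assumption, 18 articles are retained from the searches and the total of 25 included articles matches the figure reported in the text.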

Step two: Identification and classification of the indicators

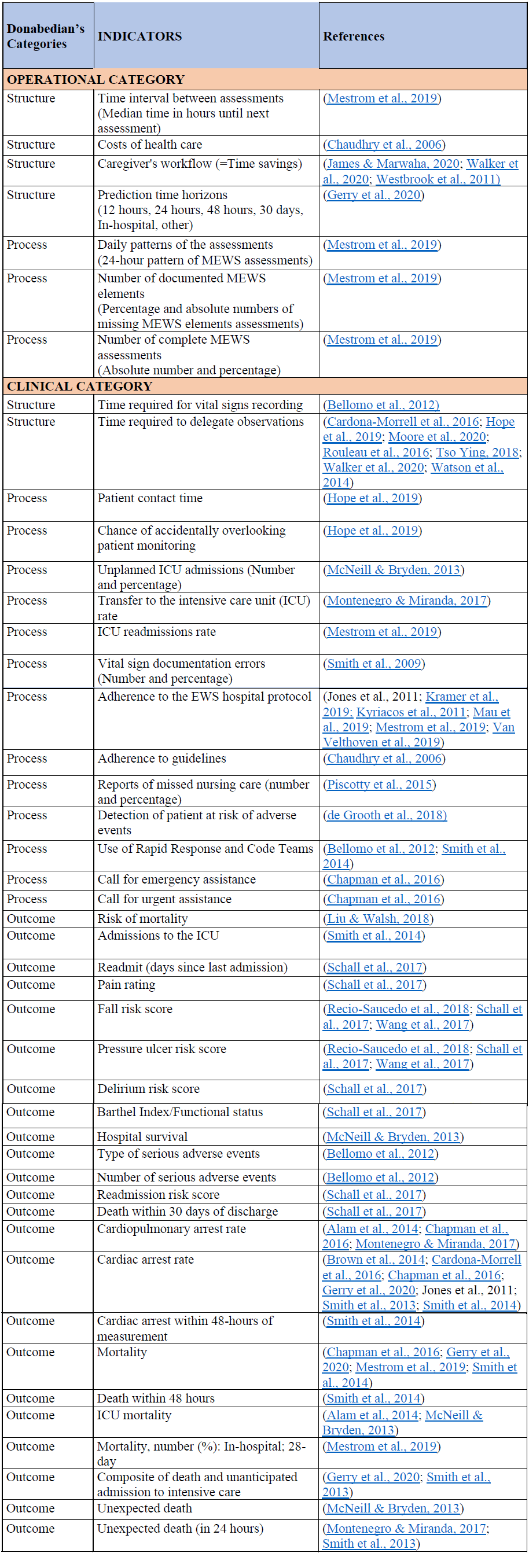

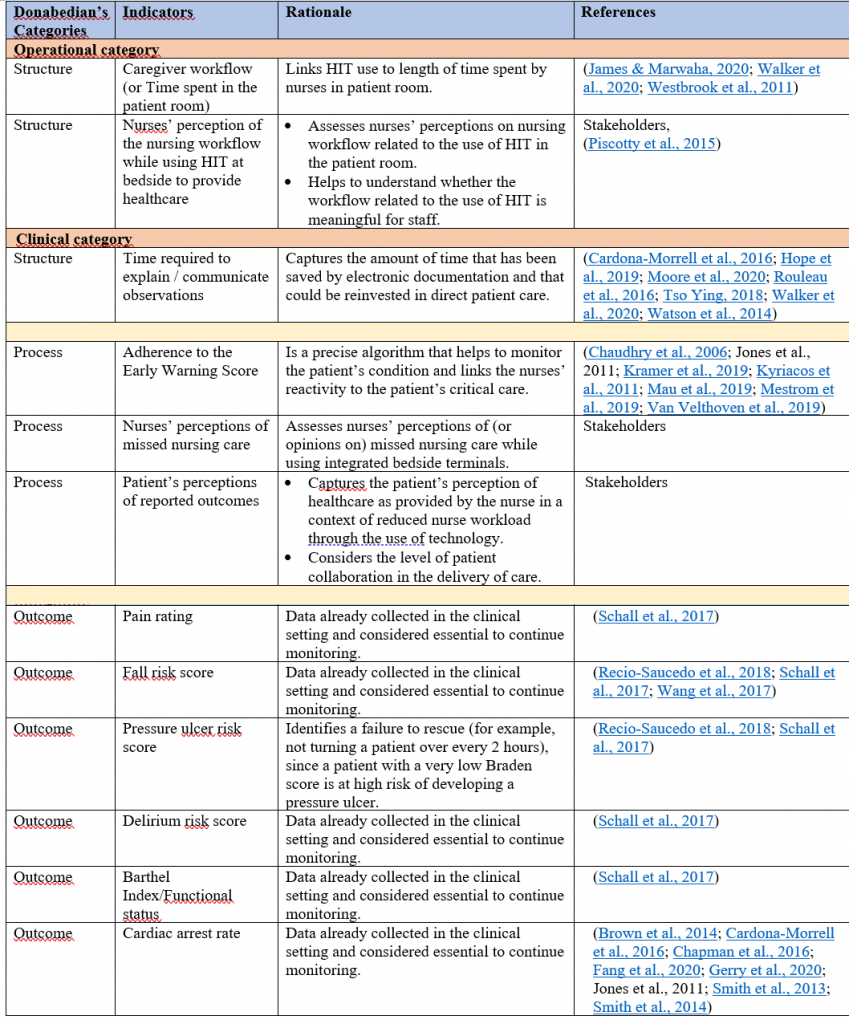

The selected articles were uploaded to an EndNote file and summarized in an Excel database that presented the objectives, methodological issues, key findings, and implications of the studies. From these articles, we extracted 65 indicators that appeared to be the most reliable for assessing the impact of information technology on nursing care and patient outcomes in the hospital setting. We used a combined framework derived from Donabedian’s tridimensional model and Mestrom et al.’s operational and clinical categories to classify the 65 indicators (Donabedian, 1974; Mestrom et al., 2019).

An initial meeting was held between the clinical stakeholders leading this initiative (the Chief Digital Health Nursing Officer and an Associate Director of Nursing) and the researchers. The 65 indicators previously retrieved from the literature were reviewed jointly by the researchers and the clinical stakeholders according to their potential relevance to this context. Stakeholders and researchers agreed on the following criteria for evaluating the 65 indicators: indicators must be measurable, direct, precise, and practical, in the sense that they can be collected in a timely fashion without placing a heavy burden on the nurses. A total of 45 indicators were retained at this stage; they are listed in Table 3. These HIT-related indicators have the potential to assess health outcomes relevant to the stakeholders, namely missed nursing care (defined as delayed or incomplete care; Kalankova et al., 2020) and nursing workflow (referring to a sequence of tasks, grouped chronologically into processes, and carried out by a set of people to achieve a given goal; Cain & Haque, 2008; Zheng et al., 2010).

Table 3: List of 45 indicators considered potentially acceptable in the clinical context

Step three: Validation of the selected indicators

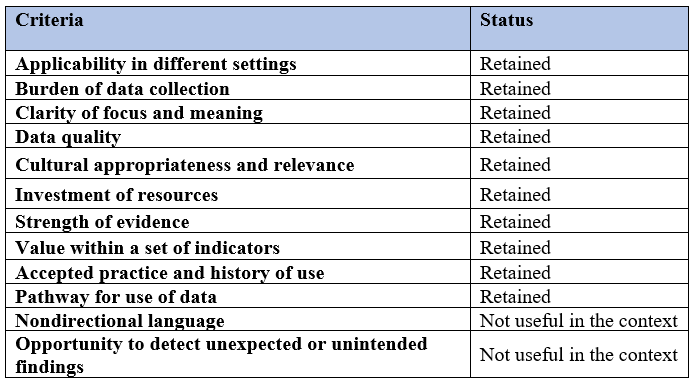

Once the research team and clinical stakeholders refined the list of potential indicators, the researchers eliminated any redundancy in the indicators. This was achieved by applying 10 criteria out of MacDonald’s 12 Criteria for Selection of High-Performing Indicators (MacDonald, 2011) deemed relevant to the selection process (Table 4). The remaining two were considered less relevant because the two-way selection process (researchers and clinical stakeholders) had already addressed the issues to which these criteria apply. This step resulted in the retention of 12 high-performing indicators that require low data collection effort and are therefore well suited to a high workload environment.

Table 4: MacDonald’s criteria retained

A new discussion round was conducted with the research team and the clinical stakeholders to ensure that the parsimonious set of 12 indicators was appropriate for the medical and surgical units. In order to facilitate the final validation, it was jointly decided to give higher weighting to some of MacDonald’s criteria. For example, Cultural appropriateness and relevance to the hospital context was felt to be crucial, even though it would require greater staff effort to collect the data. In this final round, stakeholders replaced three indicators identified in the literature with three new indicators. Time interval between assessments and Prediction time horizons were removed because clinicians were not interested in determining the frequency with which HITs perform patient assessment, as this is based on predefined information on the health parameters. Reports of missed nursing care was removed due to a lack of detail on who reports the missed nursing care. The new indicators were chosen to qualitatively assess staff and patients’ perceptions of the healthcare provided: Patient perceptions of reported outcomes; Nurses’ perceptions of workflows; and Nurses’ perceptions of missed nursing care related to the use of the digital monitors. The clinical stakeholders based the choice of these three indicators on a Time in Motion study previously conducted at the same hospital (Caron et al., 2021), which supported the relevance of these indicators in this healthcare setting.
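The idea of giving some criteria a higher weighting can be illustrated numerically. The validation described here was deliberative, and the paper does not report a scoring formula, so everything in the sketch below is an assumption: the function, the criterion labels, the weights, and the ratings are hypothetical and serve only to show how a heavily weighted criterion can outweigh a higher data collection effort.

```python
def weighted_score(ratings, weights):
    """Weighted mean of ratings in [0, 1]; criteria without a weight count as 1.0."""
    if not ratings:
        raise ValueError("at least one criterion rating is required")
    total_weight = sum(weights.get(criterion, 1.0) for criterion in ratings)
    weighted_sum = sum(
        rating * weights.get(criterion, 1.0) for criterion, rating in ratings.items()
    )
    return weighted_sum / total_weight


# Hypothetical weights: contextual relevance counts double.
weights = {"cultural_appropriateness": 2.0}

# Hypothetical ratings for two unnamed candidate indicators.
indicator_a = {"cultural_appropriateness": 1.0, "low_collection_effort": 0.0}
indicator_b = {"cultural_appropriateness": 0.0, "low_collection_effort": 1.0}

score_a = weighted_score(indicator_a, weights)
score_b = weighted_score(indicator_b, weights)
print(round(score_a, 2), round(score_b, 2))  # 0.67 0.33
```

In this toy case the indicator that fits the hospital context but is harder to collect scores higher than the one that is easy to collect but less relevant, which mirrors the priority described above.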

Results

A total of 12 indicators were ultimately selected by the clinical stakeholders and researchers, as presented in Table 5: three in the structure category, three in the process category, and six in the outcome category. Only two indicators, both structural, are operational; the remaining 10 indicators are clinical. Nine indicators were selected jointly by the stakeholders and the researchers, and three were specifically proposed by the clinical stakeholders to meet the needs of their healthcare setting.

Table 5: Selected indicators
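The counts reported in this section can be converted into shares of the final set with a few lines of arithmetic. The helper name below is illustrative; the counts are those stated in the text.

```python
# Counts reported in the Results section.
by_category = {"structure": 3, "process": 3, "outcome": 6}
by_level = {"operational": 2, "clinical": 10}


def shares(counts):
    """Return each count as a percentage of the total, rounded to one decimal."""
    total = sum(counts.values())
    return {key: round(100 * value / total, 1) for key, value in counts.items()}


print(shares(by_category))  # {'structure': 25.0, 'process': 25.0, 'outcome': 50.0}
print(shares(by_level))     # {'operational': 16.7, 'clinical': 83.3}
```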

Discussion

Health information technology has been introduced to alleviate the administrative burden of monitoring patient status; however, the question remains whether the introduction of HIT actually translates into improved nursing care. The literature to date has yet to identify a clear process for selecting indicators to evaluate the impact of HIT on nursing practice and quality of patient care. This paper describes a three-step methodology that can be replicated in similar facilities with comparable assessment needs. It suggests that the selection of a sensitive set of indicators relevant to a particular health care facility is best done through an evidence-based process involving clinical stakeholders and researchers.

Our results point to the benefits of involving stakeholders and researchers in the indicator selection process. To our knowledge, this article is the first in the literature to describe the collaboration between clinical stakeholders and researchers in a process of selecting indicators to assess the effects of HIT on nursing practice. The clinician-researcher partnership was successful in that the researchers ensured the rigorous and scientific selection of the indicators, whereas the input of the clinical stakeholders ensured that the selected indicators were directly relevant to practice. The set of indicators contains many of those needed by the clinicians to assess the impact of HIT on nursing practice and patient care outcomes in their surgical and medical units. Finding such a parsimonious set of indicators that meets clinicians’ needs underlines the benefits of a collaborative effort between clinicians and researchers, as supported by many authors (Bryson & Patton, 2010; Patton, 2008). As well, according to Montenegro and Miranda (2017), it is more appropriate for each institution to conduct its own validation process to ensure that its specificities have been taken into account.

Another important consideration is that the step-by-step methodology described in this paper could serve as a template for similar healthcare facilities seeking to select their own set of HIT-related indicators. In the case study described in this paper, the clinical stakeholders and research team used a scientifically robust approach that resulted in the selection of high-performing and useful indicators. In particular, this methodology included a scoping review conducted with the help of a health sciences librarian. The review clarified the key concepts to be prioritized, delineated the available evidence, and generated a broad set of articles pertinent to the topic from which to extract potential indicators.

Furthermore, to select a valid set of indicators, an appropriate framework is essential, as it will ultimately determine the quality of the indicators retained (Schang et al., 2021). In this regard, the tools used to categorize and refine the indicators must provide a sound and practical scientific basis. For example, Donabedian’s three-dimensional framework is widely used in health care administration and health care services research. The addition of a categorization scheme with respect to clinical and operational components complements the three-level aspects of care provision (i.e., structure, process, and outcomes) provided by Donabedian. Moreover, MacDonald’s indicator selection criteria provided a guide to selecting high-performing indicators that is supported by the health care assessment literature (Bukovszki et al., 2019; McKool et al., 2020).

In this case study, a total of 12 indicators were selected, of which 50% were outcome indicators, 25% structural indicators, and 25% process indicators. The low percentage of structural indicators compared to the outcome indicators is consistent with previous studies that have shown this type of imbalance, since many structural indicators are already incorporated into hospital accreditation standards (Copnell et al., 2009) and do not need to be measured independently. Additionally, the greater percentage of outcome indicators could be explained by the fact that outcome indicators are often considered the most credible indicators for measuring quality of care (Donabedian, 2005). Moreover, about 83% of the selected indicators (10 of 12) are clinical, compared to about 17% (two indicators) at the operational level. This imbalance in favor of clinical indicators reflects the interest in measuring the impact of HIT on nursing practice at the patient’s bedside.

Although we concluded that the 12 indicators selected in the case study are the best set of indicators for our institution to measure the impact of HIT on nursing practice at the bedside, some cautionary remarks must be noted. The indicators do not measure all aspects of nursing care related to HIT. For example, they do not capture nurse-patient interactions. A recent integrative systematic review examining technology in relation to the quality of nurses’ work in acute care revealed that there is little understanding of how HIT has contributed to the quality of nurses’ work, including nurses’ cognitive and emotional dimensions (Redley et al., 2020). On the other hand, a study carried out in a fully digital hospital in Ontario, Canada, on the use of smartphones transmitting patient health data from integrated bedside terminals directly to the nursing staff found that smartphone technology devices enable better time management. Specifically, these devices reduced unnecessary interruptions, improved patient safety by sending notifications to nurses (until one of them is notified), and enhanced nurse-patient interactions, allowing timely personalized communication between patient and nurse (Burkoski et al., 2019). Although smartphones were not the technology under study in our hospital centre, this provides insight into the benefits of bedside technology to improve nurse-patient interactions. Therefore, there is a need to identify or develop indicators that assess psychosocial interactions between patients and nurses in the context of integrated bedside terminals.

Another consideration is that the use of information technology at the bedside does not necessarily translate into immediate time savings for nurses. Prgomet et al. (2016) reported that the successful implementation of these technologies requires training and extensive interdisciplinary communication among staff. Workflow is likely to gradually improve as clinicians become accustomed to these technologies (Walker et al., 2020). Consequently, the nurses in the tertiary hospital described in this paper will need time to integrate HIT into their daily practice, which may limit the amount of time they spend with patients initially. Therefore, despite proper selection of indicators by a team of clinical stakeholders and researchers, time gains at the bedside may not be immediately apparent as per management expectations.

The indicators will allow collection of useful data; however, further assessments are needed to determine whether the nursing practices, clinical behaviors, and care processes related to IBT monitors are optimal. Stakeholders’ identification of aspects of nursing practice that need improvement could guide research into additional indicators.

Finally, we recognize that the three-step methodology proposed in this paper is resource-intensive in terms of staff and time available to conduct the necessary research, classification work, and discussions. The list of 45 indicators in Table 3 could serve as a starting point in the discussion process in settings with similar clinical needs but fewer research resources.

Limitations

An extensive literature review was conducted; however, relevant research on the relationship between digital vital signs monitors, nursing practice, and patient outcomes may have been overlooked. Additionally, while stakeholders and researchers have been able to select high-performing indicators, further research is needed on the challenges nurses will face while interacting with the digital vital signs monitors. One current challenge for nurses is to integrate and make good use of health information technologies. As nurses are challenged to develop new technology-related skills (Burkoski, 2019; Saab et al., 2017), nurse leaders have a critical role to play in the continuous improvement of these monitors by supporting their development and design (Burkoski, 2019) in collaboration with HIT companies.

In addition, indicators retained in the case study are specific to the context of a particular hospital and, therefore, may not be generalizable to other care settings. This suggests once again the need for each healthcare institution to conduct its own validation process in the selection of appropriate indicators. As the critical mass of literature in the area grows, it may become possible to generate a set of indicators that are common to all care settings.

Conclusion

Through a collaborative approach involving key stakeholders and researchers, a set of indicators to assess the impact of integrated bedside monitors on nursing care and patient health outcomes in medical and surgical units of the study hospital was selected and validated. These endorsed indicators will provide important operational and clinical information that will inform timely decisions concerning the strategic advancement of HIT in nursing practice. The three-step methodology developed and applied in this case study may be helpful to other healthcare facilities.

References

Alam, N., Hobbelink, E. L., van Tienhoven, A. J., van de Ven, P. M., Jansma, E. P., & Nanayakkara, P. W. (2014). The impact of the use of the Early Warning Score (EWS) on patient outcomes: A systematic review. Resuscitation, 85(5), 587–594. https://doi.org/10.1016/j.resuscitation.2014.01.013

Arksey, H., & O’Malley, L. (2005). Scoping studies: Towards a methodological framework. International Journal of Social Research Methodology, 8(1), 19–32. https://doi.org/10.1080/1364557032000119616

Bellomo, R., Ackerman, M., Bailey, M., Beale, R., Clancy, G., Danesh, V., Hvarfner, A., Jimenez, E., Konrad, D., Lecardo, M., Pattee, K. S., Ritchie, J., Sherman, K., Tangkau, P., & the Vital Signs to Identify, Target, and Assess Level of Care Study (VITAL Care Study) Investigators. (2012). A controlled trial of electronic automated advisory vital signs monitoring in general hospital wards. Critical Care Medicine, 40(8), 2349–2361. https://doi.org/10.1097/CCM.0b013e318255d9a0

Bermúdez-Camps, I. B., Flores-Hernández, M. A., Aguilar-Rubio, Y., López-Orozco, M., Barajas-Esparza, L., Téllez López, A. M., García-Pérez, M. E., Fegadolli, C., & Reyes-Hernández, I. (2021). Design and validation of quality indicators for drugs dispensing in a pediatric hospital. Journal of the American Pharmacists Association, 61, e289–e300. https://doi.org/10.1016/j.japh.2021.02.018

Binder, C., Torres, R. E., & Elwell, D. (2021). Use of the Donabedian Model as a framework for COVID-19 response at a hospital in suburban Westchester County, New York: A facility-level case report. Journal of Emergency Nursing, 47(2), 239–255. https://doi.org/10.1016/j.jen.2020.10.008

Brown, H., Terrence, J., Vasquez, P., Bates, D. W., & Zimlichman, E. (2014). Continuous monitoring in an inpatient medical-surgical unit: A controlled clinical trial. The American Journal of Medicine, 127(3), 226–232. https://doi.org/10.1016/j.amjmed.2013.12.004

Bukovszki, V., Apro, D., Khoja, A., Essig, N., & Reith, A. (2019). From assessment to implementation: Design considerations for scalable decision-support solutions in sustainable urban development. IOP Conference Series: Earth and Environmental Science, 290, 012112. https://doi.org/10.1088/1755-1315/290/1/012112

Burkoski, V. (2019). Nursing leadership in the fully digital practice realm. Journal of Nursing Leadership, 32(Special Issue), 9–15. https://doi.org/10.12927/cjnl.2019.25818

Burkoski, V., Yoon, J., Hall, T., Solomon, S., Gelmi, S., Fernandes, K., & Collins, B. E. (2019). Patient empowerment and nursing clinical workflows enhanced by Integrated Bedside Terminals. Nursing Leadership, 32(SP), 42–57. https://doi.org/10.12927/cjnl.2019.25815

Cain, C., & Haque, S. (2008). Organizational workflow and its impact on work quality. In R. G. Hughes (Ed.), Patient safety and quality: An evidence-based handbook for nurses (pp. 2-217–2-244). Agency for Healthcare Research and Quality Publication No. 08-0043. https://www.ncbi.nlm.nih.gov/books/NBK2651/

Cardona-Morrell, M., Prgomet, M., Turner, R. M., Nicholson, M., & Hillman, K. (2016). Effectiveness of continuous or intermittent vital signs monitoring in preventing adverse events on general wards: A systematic review and meta-analysis. International Journal of Clinical Practice, 70(10), 806–824. https://doi.org/10.1111/ijcp.12846

Caron, I., Gélinas, C., Boileau, J., Frunchak, V., Casey, A., & Hurst, K. (2021). Initial testing of the use of the Safer Care Nursing Tool in a Canadian acute care context. Journal of Nursing Management, 29(6), 1801–1808. https://doi.org/10.1111/jonm.13300

Chapman, S. M., Wray, J., Oulton, K., & Peters, M. J. (2016). Systematic review of paediatric track and trigger systems for hospitalised children. Resuscitation, 109, 87–109. https://doi.org/10.1016/j.resuscitation.2016.07.230

Chaudhry, B., Wang, J., Wu, S., Maglione, M., Mojica, W., Roth, E., Morton, S. C., & Shekelle, P. G. (2006). Systematic review: Impact of health information technology on quality, efficiency, and costs of medical care. Annals of Internal Medicine, 144(10), 742–752. https://doi.org/10.7326/0003-4819-144-10-200605160-00125

Cho, K.-J., Kwon, O., Kwon, J.-M., Lee, Y., Park, H., Jeon, K.-H., Kim, K. H., Park, J., & Oh, B.-H. (2020). Detecting patient deterioration using artificial intelligence in a rapid response system. Critical Care Medicine, 48(4), e285–e289. https://doi.org/10.1097/ccm.0000000000004236

Copnell, B., Hagger, V., Wilson, S. G., Evans, S. M., Sprivulis, P. C., & Cameron, P. A. (2009). Measuring the quality of hospital care: An inventory of indicators. Internal Medicine Journal, 39(6), 352–360. https://doi.org/10.1111/j.1445-5994.2009.01961.x

Coyle, Y., & Battles, J. (1999). Using antecedents of medical care to develop valid quality of care measures. International Journal for Quality in Health Care, 11(1), 5–12. https://doi.org/10.1093/intqhc/11.1.5

Davis, K., Drey, N., & Gould, D. (2009). What are scoping studies? A review of the nursing literature. International Journal of Nursing Studies, 46, 1386–1400. https://doi.org/10.1016/j.ijnurstu.2009.02.010

de Grooth, H. J., Girbes, A. R., & Loer, S. A. (2018). Early warning scores in the perioperative period: Applications and clinical operating characteristics. Current Opinion in Anesthesiology, 31(6), 732–738. https://doi.org/10.1097/ACO.0000000000000657

Donabedian, A. (2005). Evaluating the quality of medical care. Milbank Quarterly, 83, 691–729. https://doi.org/10.1111/j.1468-0009.2005.00397.x

Fang, A. H. S., Lim, W. T., & Balakrishnan, T. (2020). Early warning score validation methodologies and performance metrics: A systematic review. BMC Medical Informatics and Decision Making, 20(1), 111. https://doi.org/10.1186/s12911-020-01144-8

Gardner, G., Gardner, A., & O’Connell, J. (2014). Using the Donabedian framework to examine the quality and safety of nursing service innovation. Journal of Clinical Nursing, 23(1-2), 145–155. https://doi.org/10.1111/jocn.12146

Gerry, S., Bonnici, T., Birks, J., Kirtley, S., Virdee, P. S., Watkinson, P. J., & Collins, G. S. (2020). Early warning scores for detecting deterioration in adult hospital patients: Systematic review and critical appraisal of methodology. BMJ, 369, m1501. https://doi.org/10.1136/bmj.m1501

Helfand, M., Christensen, V., & Anderson, J. (2016). Technology assessment: EarlySense for monitoring vital signs in hospitalized patients (VA ESP Project #09-199). Evidence-based Synthesis Program. https://www.ncbi.nlm.nih.gov/books/NBK384615/

Hope, J., Griffiths, P., Schmidt, P. E., Recio-Saucedo, A., & Smith, G. B. (2019). Impact of using data from electronic protocols in nursing performance management: A qualitative interview study. Journal of Nursing Management, 27, 1682–1690. https://doi.org/10.1111/jonm.12858

Iroju, O., Soriyan, A., Gambo, I., & Olaleke, J. (2013). Interoperability in healthcare: Benefits, challenges and resolutions. International Journal of Innovation and Applied Studies, 3(1), 262–270. http://www.ijias.issr-journals.org/abstract.php?article=IJIAS-13-090-01

James, N., & Marwaha, S. (2020). Impact of single sign-on adoption in an assessment triage unit: A hospital’s journey to higher efficiency. The Journal of Nursing Administration, 50(3), 159–164. https://doi.org/10.1097/NNA.0000000000000860

Jardim, S. V. B. (2013). The electronic health record and its contribution to healthcare information systems interoperability. Procedia Technology, 9, 940–948. https://doi.org/10.1016/j.protcy.2013.12.105

Jennings, B. M., Staggers, N., & Brosch, L. R. (1999). A classification scheme for outcome indicators. Image: Journal of Nursing Scholarship, 31(4), 381–388. https://doi.org/10.1111/j.1547-5069.1999.tb00524.x

Kalankova, D., Kirwan, M., Bartonickova, D., Cubelo, F., Ziakova, K., & Kurucova, R. (2020). Missed, rationed or unfinished nursing care: A scoping review of patient outcomes. Journal of Nursing Management, 28(8), 1783–1797. https://doi.org/10.1111/jonm.12978

Koffel, J. B. (2015). Use of recommended search strategies in systematic reviews and the impact of librarian involvement: A cross-sectional survey of recent authors. PLoS One, 10(5), e0125931. https://doi.org/10.1371/journal.pone.0125931

Kramer, A. A., Sebat, F., & Lissauer, M. (2019). A review of early warning systems for prompt detection of patients at risk for clinical decline. Journal of Trauma & Acute Care Surgery, 87, S67–S73. https://doi.org/10.1097/ta.0000000000002197

Kyriacos, U., Jelsma, J., & Jordan, S. (2011). Monitoring vital signs using early warning scoring systems: A review of the literature. Journal of Nursing Management, 19(3), 311–330. https://doi.org/10.1111/j.1365-2834.2011.01246.x

Labella, B., De Blasi, R., Raho, V., Tozzi, Q., Caracci, G., & Klazinga, N. S. (2021). Patient safety monitoring in acute care in a decentralized national health care system: Conceptual framework and initial set of actionable indicators. Journal of Patient Safety, 18(2), e480–e488. https://doi.org/10.1097/PTS.0000000000000851

Levac, D., Colquhoun, H., & O’Brien, K. K. (2010). Scoping studies: Advancing the methodology. Implementation Science, 5(69), 1–9. https://doi.org/10.1186/1748-5908-5-69

Liu, W., & Walsh, T. (2018). The impact of implementation of a clinically integrated problem-based neonatal electronic health record on documentation metrics, provider satisfaction, and hospital reimbursement: A quality improvement project. JMIR Medical Informatics, 6(2), e40. https://doi.org/10.2196/medinform.9776

MacDonald, G. (2011). Criteria for selection of high-performing indicators: A checklist to inform monitoring and evaluation. The Evaluation Center. https://www.betterevaluation.org/en/resources/tool/criteria_for_selection_of_high-performing_indicators

Mainz, J. (2003). Defining and classifying clinical indicators for quality improvement. International Journal for Quality in Health Care, 15(6), 523–530. https://doi.org/10.1093/intqhc/mzg081

Martinez, D. A., Kane, E. M., Jalalpour, M., Scheulen, J., Rupani, H., Toteja, R., Barbara, C., Bush, B., & Levin, S. R. (2018). An electronic dashboard to monitor patient flow at the Johns Hopkins Hospital: Communication of key performance indicators using the Donabedian Model. Journal of Medical Systems, 42(8), 133. https://doi.org/10.1007/s10916-018-0988-4

Mau, K. A., Fink, S., Hicks, B., Brookhouse, A., Flannery, A. M., & Siedlecki, S. L. (2019). Advanced technology leads to earlier intervention for clinical deterioration on medical/surgical units. Applied Nursing Research, 49, 1–4. https://doi.org/10.1016/j.apnr.2019.07.001

McCance, T. V. (2003). Caring in nursing practice: The development of a conceptual framework. Research and Theory for Nursing Practice, 17(2), 101–116. https://doi.org/10.1891/rtnp.17.2.101.53174

McKool, M., Freire, K., Basile, K., Jones, K., Klevens, J., DeGue, S., & Smith, S. (2020). A process for identifying indicators with public data: An example from sexual violence prevention. American Journal of Evaluation, 41(4), 510–528. https://doi.org/10.1177/1098214019891239

McNeill, G., & Bryden, D. (2013). Do either early warning systems or emergency response teams improve hospital patient survival? A systematic review. Resuscitation, 84(12), 1652–1667. https://doi.org/10.1016/j.resuscitation.2013.08.006

Mestrom, E., De Bie, A., van de Steeg, M., Driessen, M., Atallah, L., Bezemer, R., Bouwman, R. A., & Korsten, E. (2019). Implementation of an automated early warning scoring system in a surgical ward: Practical use and effects on patient outcomes. PLoS One, 14(5), e0213402. https://doi.org/10.1371/journal.pone.0213402

Montenegro, S. M. S. L., & Miranda, C. H. (2017). Evaluation of the performance of the modified early warning score in a Brazilian public hospital. Revista Brasileira de Enfermagem, 72(6), 1428–1434. https://doi.org/10.1590/0034-7167-2017-0537

Moore, E. C., Tolley, C. L., Bates, D. W., & Slight, S. P. (2020). A systematic review of the impact of health information technology on nurses’ time. Journal of the American Medical Informatics Association, 27(5), 798–807. https://doi.org/10.1093/jamia/ocz231

Murphy, A., Cronin, J., Whelan, R., Drummond, F. J., Savage, E., & Hegarty, J. (2018). Economics of Early Warning Scores for identifying clinical deterioration: A systematic review. Irish Journal of Medical Science, 187(1), 193–205. https://doi.org/10.1007/s11845-017-1631-y

Patton, M. Q. (2008). Utilization-focused evaluation (4th ed.). Sage.

Peters, M., Godfrey, C., McInerney, P., Munn, Z., Tricco, A., & Khalil, H. (2020). Chapter 11: Scoping reviews. In E. Aromataris & Z. Munn (Eds.), JBI Manual for Evidence Synthesis. JBI. https://doi.org/10.46658/JBIMES-20-12

Petit, A., & Cambon, L. (2016). Exploratory study of the implications of research on the use of smart connected devices for prevention: A scoping review. BMC Public Health, 16, 552. https://doi.org/10.1186/s12889-016-3225-4

Piscotty, R. J., Jr., & Kalisch, B. (2014). The relationship between electronic nursing care reminders and missed nursing care. CIN: Computers, Informatics, Nursing, 32(10), 475–481. https://doi.org/10.1097/CIN.0000000000000092

Piscotty, R. J., Kalisch, B., & Gracey-Thomas, A. (2015). Impact of healthcare information technology on nursing practice. Journal of Nursing Scholarship, 47(4), 287–293. https://doi.org/10.1111/jnu.12138

Prgomet, M., Cardona-Morrell, M., Nicholson, M., Lake, R., Long, J., Westbrook, J., Braithwaite, J., & Hillman, K. (2016). Vital signs monitoring on general wards: Clinical staff perceptions of current practices and the planned introduction of continuous monitoring technology. International Journal for Quality in Health Care, 28(4), 515–521. https://doi.org/10.1093/intqhc/mzw062

Recio-Saucedo, A., Dall’Ora, C., Maruotti, A., Ball, J., Briggs, J., Meredith, P., Redfern, O. C., Kovacs, C., Prytherch, D., Smith, G. B., & Griffiths, P. (2018). What impact does nursing care left undone have on patient outcomes? Review of the literature. Journal of Clinical Nursing, 27(11-12), 2248–2259. https://doi.org/10.1111/jocn.14058

Redley, B., Douglas, T., & Botti, M. (2020). Methods used to examine technology in relation to the quality of nursing work in acute care: A systematic integrative review. Journal of Clinical Nursing, 29(9/10), 1477–1487. https://doi.org/10.1111/jocn.15213

Rethlefsen, M. L., Farrell, A. M., Osterhaus Trzasko, L. C., & Brigham, T. J. (2015). Librarian co-authors correlated with higher quality reported search strategies in general internal medicine systematic reviews. Journal of Clinical Epidemiology, 68(6), 617–626. https://doi.org/10.1016/j.jclinepi.2014.11.025

Rouleau, G., Gagnon, M.-P., Côté, J., Payne-Gagnon, J., Hudson, E., & Dubois, C.-A. (2016). How do information and communication technologies influence nursing care? Studies in Health Technology & Informatics, 225, 934–935. https://doi.org/10.3233/978-1-61499-658-3-934

Saab, M. M., McCarthy, B., Andrews, T., Savage, E., Drummond, F. J., Walshe, N., Forde, M., Breen, D., Henn, P., Drennan, J., & Hegarty, J. (2017). The effect of adult Early Warning Systems education on nurses’ knowledge, confidence and clinical performance: A systematic review. Journal of Advanced Nursing, 73(11), 2506–2521. https://doi.org/10.1111/jan.13322

Schall, M. C., Cullen, L., Pennathur, P., Chen, H., Burrell, K., & Matthews, G. (2017). Usability evaluation and implementation of a health information technology dashboard of evidence-based quality indicators. CIN: Computers, Informatics, Nursing, 35(6), 281–288. https://doi.org/10.1097/CIN.0000000000000325

Schang, L., Blotenberg, I., & Boywitt, D. (2021). What makes a good quality indicator set? A systematic review of criteria. International Journal for Quality in Health Care, 33(3), 1–10. https://doi.org/10.1093/intqhc/mzab107

Smith, G. B., Prytherch, D. R., Meredith, P., Schmidt, P. E., & Featherstone, P. I. (2013). The ability of the National Early Warning Score (NEWS) to discriminate patients at risk of early cardiac arrest, unanticipated intensive care unit admission, and death. Resuscitation, 84(4), 465–470. https://doi.org/10.1016/j.resuscitation.2012.12.016

Smith, L. B., Banner, L., Lozano, D., Olney, C. M., & Friedman, B. (2009). Connected care: Reducing errors through automated vital signs data upload. CIN: Computers, Informatics, Nursing, 27(5), 318–323. https://doi.org/10.1097/NCN.0b013e3181b21d65

Smith, M. E., Chiovaro, J. C., O’Neil, M., Kansagara, D., Quinones, A. R., Freeman, M., Motu’apuaka, M. L., & Slatore, C. G. (2014). Early warning system scores for clinical deterioration in hospitalized patients: A systematic review. Annals of the American Thoracic Society, 11(9), 1454–1465. https://doi.org/10.1513/AnnalsATS.201403-102OC

Tso Ying, L. (2018). The use of information technology to enhance patient safety and nursing efficiency. Studies in Health Technology & Informatics, 250, 192–192. https://doi.org/10.3233/978-1-61499-872-3-192

Van Velthoven, M. H., Adjei, F., Vavoulis, D., Wells, G., Brindley, D., & Kardos, A. (2019). ChroniSense National Early Warning Score Study (CHESS): A wearable wrist device to measure vital signs in hospitalised patients: Protocol and study design. BMJ Open, 9(9), e028219. https://doi.org/10.1136/bmjopen-2018-028219

Wakefield, B. J. (2014). Facing up to the reality of missed care. BMJ Quality & Safety, 23(2), 92–94. https://doi.org/10.1136/bmjqs-2013-002489

Walker, R. M., Burmeister, E., Jeffrey, C., Birgan, S., Garrahy, E., Andrews, J., Hada, A., & Aitken, L. M. (2020). The impact of an integrated electronic health record on nurse time at the bedside: A pre-post continuous time and motion study. Collegian, 27(1), 63–74. https://doi.org/10.1016/j.colegn.2019.06.006

Wang, Z., Yang, Z., & Dong, T. (2017). A review of wearable technologies for elderly care that can accurately track indoor position, recognize physical activities and monitor vital signs in real time. Sensors (Basel), 17(2), 341. https://doi.org/10.3390/s17020341

Watson, A., Skipper, C., Steury, R., Walsh, H., & Levin, A. (2014). Inpatient nursing care and early warning scores: A workflow mismatch. Journal of Nursing Care Quality, 29(3), 215–222. https://doi.org/10.1097/ncq.0000000000000058

Westbrook, J. I., Duffield, C., Li, L., & Creswick, N. J. (2011). How much time do nurses have for patients? A longitudinal study quantifying hospital nurses’ patterns of task time distribution and interactions with health professionals. BMC Health Services Research, 11, 319. https://doi.org/10.1186/1472-6963-11-319

Whittemore, R., & Knafl, K. (2005). The integrative review: Updated methodology. Journal of Advanced Nursing, 52(5), 546–553. https://doi.org/10.1111/j.1365-2648.2005.03621.x

Xiao, S., Kidger, K., & Tourangeau, A. (2017). Nursing process health care indicators: A scoping review of development methods. Journal of Nursing Care Quality, 32(1), 32–39. https://doi.org/10.1097/NCQ.0000000000000207

Zheng, K., Haftel, H. M., Hirschl, R. B., O’Reilly, M., & Hanauer, D. A. (2010). Quantifying the impact of health IT implementations on clinical workflow: A new methodological perspective. Journal of the American Medical Informatics Association, 17(4), 454–461. https://doi.org/10.1136/jamia.2010.004440

Author Biographies

Félix Prophète, DÉPA

Félix Prophète is the Administrative Process Specialist of the McGill Nursing Collaborative, Jewish General Hospital, CIUSSS (Integrated University Health and Social Services Centre) West-Central Montreal. He holds a DÉPA in Analysis and Management of Healthcare/Healthcare Services from Université de Montréal.

Ève Bourbeau-Allard, MSI, MA

Ève Bourbeau-Allard is the Research Librarian at the Centre for Nursing Research, Jewish General Hospital, CIUSSS West-Central Montreal. She holds a Master’s in Information Science from the University of Michigan, Ann Arbor, MI, USA.

Marc-André Reid, RN, MSc(c)

Marc-André Reid is the Chief Nursing Information Officer, Department of Nursing, Jewish General Hospital, CIUSSS West-Central Montreal. He is a Master’s student in Nursing Sciences at Université de Montréal.

Isabelle Caron, MScN

Isabelle Caron is the Associate Director of Nursing of the CIUSSS West-Central Montreal. She holds a Master’s in Nursing from the Université de Montréal and has over 20 years of experience in nursing management.

Dr. Christina Clausen, RN, PhD

Dr. Clausen is the Coordinator of the McGill Nursing Collaborative at CIUSSS West-Central Montreal; a Faculty Lecturer at the Ingram School of Nursing, McGill University; and a Course Director for the Office of Interprofessional Education, McGill University. She holds a PhD in Nursing Administration from McGill University.

Dr. Margaret Purden, RN, PhD

Dr. Purden is the Scientific Director of the Centre for Nursing Research, Jewish General Hospital; an Associate Professor at the Ingram School of Nursing, McGill University; and the Director of the Office of Interprofessional Education, McGill University. She holds a PhD in Nursing from McGill University and has conducted considerable work in the areas of interprofessional education and nursing services, in addition to research in the field of cardiovascular nursing.

Acknowledgments: No acknowledgments to declare.

Disclosure statement: The authors report that there are no competing interests to declare.

Funding: This work was supported by the McGill Nursing Collaborative for Education and Innovation in Patient- and Family-Centered Care.

*Corresponding author

Félix Prophète

Centre for Nursing Research / McGill Nursing Collaborative, Jewish General Hospital

3755 ch. Côte-Sainte-Catherine | B-608

Montréal, QC, H3T 1E2